Avid readers of our INRIX blogs will already be aware of our Innovation Week in November 2025. For those who aren’t, I recommend reading Driving the Future: INRIX Celebrates Its Fourth Annual Innovation Week and From Data to Purpose: Reimagining Commute Insights at INRIX Innovation Week.

The blog 25 Years of Traffic Tech: From Radio Reports to AI Insights shares the same theme and explores more of the cutting-edge AI in which INRIX has a rich history. I am pleased that our project was voted the “Most Innovative” by the judging team, and a lot of the credit goes to the fantastically talented team of engineers, project managers and planners (Leo Zhou, Rohan Raghavan, David Moseley, Graham Bruce) that I was lucky enough to work with.

Decoding Traffic Patterns

We also have a long history of reporting congestion. Radio stations, logistics companies, and an incalculable number of regular travelers around the world rely on our understanding to avoid getting stuck in traffic. We have built both a team of experts and a technology stack that make this demanding job easier. This has enabled us to generate a huge archive representing the changes in traffic flow as congestion hits.

You may already be familiar, from our IQ traffic maps, with how abnormal flow is represented as ever more intense colours on the road. Watching the changes as they happen across the network, you can follow and learn to predict the level of impact. A minor slowdown on a more remote road won’t have a significant impact, whereas big changes in a dense urban network, or on major freeways, could cause the kind of chaos that lasts for hours.

The experts we have at INRIX use this kind of view to add context to our reports, estimating the impact and duration of each incident. But they can’t cover every incident, so I assembled a small team to try to help them out.

Large Language Models (LLMs) are Good, but not Great

AI is transforming the world: how we work, how we learn and how we digest information. The computational unit behind this is the transformer architecture, which encodes the relationships between words. The simple act of predicting the next word from the preceding context has changed the game. The best models are now on par with the smartest of human minds on a diverse range of subjects.

However, while these LLMs are fantastic at lots of things, they are not great at interpreting traffic. That’s partly because, unlike text, traffic is the consequence of multiple dimensions: the traffic on one road is influenced by all the roads that feed in and out of it. These changes are also temporal. Flow changes over time, as the density of vehicles builds up and dissipates due to the (in)ability of cars to move forward. Encoding the context in a way that a transformer can make sense of is a real challenge, and something I have been thinking about in my day job, as highlighted in the blog The Need for Traffic Foundation Models.

There are some fantastic advantages to an LLM that can make sense of incidents. Being able to query the potential impact and duration of the issue in natural language means we can unlock a diverse range of products suitable for co-pilots, voice-assistants, and automated report generation.

The Secret Sauce

Given the challenges, how did we solve the problem? Well, the first part was an embedding issue. We treated the road network as an interconnected graph, where the road segments are linked together at intersections. In this representation, each road segment, with its own unique traits, is encoded using a particular dictionary of symbols, each with its own properties. The result starts to resemble other graph structures, namely molecules.

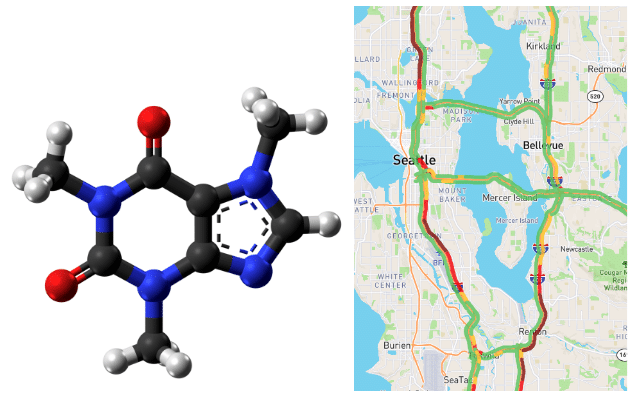

On the left, the chemical structure of caffeine (from Wikipedia), colored by chemical element. On the right, the main routes in, out, and across Seattle, colored by congestion-affected speed (from iq.inrix.com). The combination of element and structure (note the rings) in caffeine keeps people awake; similarly, the combination of ring connections and congestion keeps Seattle DOT’s traffic planners awake. These similarities (in structure and property) allow us to encode traffic congestion using a system like SMILES, a text-based representation of chemical structure that LLMs can understand.

Borrowing heavily from computational chemistry, we created a system for encoding traffic graphs as versions of SMILES strings. By tracking the lifecycle of congestion across thousands of archival incidents as a series of molecule-like strings, combined with expert annotation, we generated a corpus of training data suitable for fine-tuning an open-source, multi-billion-parameter model.
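To give a flavour of the idea, here is a minimal Python sketch of how a small road graph could be written as a SMILES-like string. It is an illustration only, not our production encoding: the `SYMBOLS` dictionary, the `encode` function and the toy network are all hypothetical, and it assumes fewer than ten rings so that each ring closure fits in a single digit. As in SMILES, side roads become parenthesised branches and a repeated digit marks the two segments that close a ring.

```python
from collections import defaultdict

# Hypothetical symbol dictionary: congestion level -> one-letter token.
SYMBOLS = {"free": "F", "slow": "S", "jammed": "J"}


def encode(segments, links, start):
    """Write a road graph as a SMILES-like string (illustrative only).

    segments: dict of segment id -> congestion level (a key of SYMBOLS)
    links:    pairs of segment ids that meet at an intersection
    start:    segment id where the traversal begins
    """
    neighbours = defaultdict(list)
    for a, b in links:
        neighbours[a].append(b)
        neighbours[b].append(a)

    visited = set()
    seen_links = set()
    tree_children = defaultdict(list)  # spanning-tree structure
    ring_labels = defaultdict(list)    # ring-closure digits per segment
    next_label = 1

    def explore(node):
        # Pass 1: depth-first search that separates tree links from
        # the links that close a ring.
        nonlocal next_label
        visited.add(node)
        for other in sorted(neighbours[node]):
            link = frozenset((node, other))
            if link in seen_links:
                continue
            seen_links.add(link)
            if other in visited:
                # Link back to an earlier segment: record a ring closure.
                ring_labels[node].append(next_label)
                ring_labels[other].append(next_label)
                next_label += 1
            else:
                tree_children[node].append(other)
                explore(other)

    def write(node):
        # Pass 2: emit the symbol, its ring digits, then its children.
        text = SYMBOLS[segments[node]]
        text += "".join(str(label) for label in ring_labels[node])
        children = tree_children[node]
        for child in children[:-1]:
            text += "(" + write(child) + ")"  # side branch
        if children:
            text += write(children[-1])       # main chain continues
        return text

    explore(start)
    return write(start)


# Toy network: an approach road "a" feeding a ring b-c-d, with an exit "e".
segments = {"a": "free", "b": "slow", "c": "jammed", "d": "slow", "e": "free"}
links = [("a", "b"), ("b", "c"), ("c", "d"), ("d", "b"), ("c", "e")]
print(encode(segments, links, "a"))  # FS1J(S1)F
```

Encoding the same network at successive time steps, with the congestion levels updated each time, would give the kind of series of strings described above.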

Of course, traffic is tricky, and we wanted to create something that could minimise the mistakes made. So we used a training scheme that introduced reasoning into the base model alongside our new representation of traffic. Reasoning models consider a problem internally, as if mulling it over, producing more reliable output on more complex problems. We fine-tuned a model to become a traffic expert almost as good as our in-house team.

The Future of AI in Traffic Intelligence

I am a big fan of innovation weeks, for lots of reasons. Sometimes we can use them to pick up all kinds of new technical skills, or to get those nagging ideas out of our heads and into silicon. On other occasions we get to work with people we don’t normally cross paths with, in parts of the business we rarely venture into. On this occasion the stars aligned for me and all those pieces fell into place. The model itself needs a bit of work, but we made a fantastic start, and it proves the idea has legs.